A fund manager posts three consecutive quarters of strong returns and attracts a flood of new investment, despite having a mediocre ten-year record. A sports commentator declares a player in “the form of their life” after a strong month, discounting a career of inconsistency. A traveler who experienced a single flight delay swears off a reliable airline permanently. A person who got food poisoning once from a restaurant refuses to return, even years later when all the staff have turned over. In each case, the most recent information is doing far more than its proportional share of the analytical work, nudging judgment away from the longer record and toward the most accessible data point: the one that just happened.

This is recency bias, one of the most pervasive and consequential of the cognitive shortcuts that govern human decision-making. It is not a quirk of certain personality types or a failure of intelligence. It is a systematic feature of how memory and prediction interact in the human brain, with roots that reach down into the architecture of the hippocampus, the predictive coding system, and the dopaminergic learning circuits that update beliefs in response to new information. Understanding why the brain is built this way, and what that means for the quality of judgments in a world that rewards long-horizon thinking, is the subject of this first article in the series on decision-making and judgment.

The Memory Architecture Behind Recency

Recency bias finds its first neurological home in the structure of memory itself. As established in the Memory Systems series earlier in this collection, episodic memory traces are not equally accessible at the point of retrieval. Memories that are more recently encoded have had less time to consolidate into stable cortical representations and remain more dependent on hippocampal activation for retrieval. They are, figuratively speaking, closer to the surface: less deeply embedded in the distributed cortical network of long-term memory, and therefore more readily activated by the kinds of partial cues that retrieval typically involves.

When the brain is asked to assess a pattern, evaluate a situation, or form a judgment, it draws on available memory in proportion to retrieval ease. Recent events, being easier to retrieve, contribute disproportionately to the constructed picture. Older events, more deeply consolidated but less immediately accessible, require more deliberate retrieval effort to bring into the assessment and are consequently underweighted unless a person is specifically motivated to seek them out. This is not lazy thinking; it is the predictable output of a retrieval system that operates on accessibility rather than proportional representation.
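One way to make the accessibility-weighting idea concrete is a toy calculation. The sketch below is not a model of hippocampal retrieval. It simply assumes that each observation's influence shrinks geometrically with its age, using a hypothetical `decay` parameter, and compares the resulting impression with an equal-weighted average over a made-up ten-year quarterly record.

```python
def weighted_impression(outcomes, decay):
    """Average outcomes (oldest first), weighting each by decay ** age.

    decay = 1.0 weights every observation equally; smaller values make
    older observations fade faster. The decay value is an illustrative
    assumption, not an empirical constant.
    """
    n = len(outcomes)
    weights = [decay ** (n - 1 - i) for i in range(n)]
    return sum(w * x for w, x in zip(weights, outcomes)) / sum(weights)


# Hypothetical record: 37 mediocre quarters (1% each), then 3 strong ones (6% each).
quarterly_returns = [1.0] * 37 + [6.0] * 3

full_record = weighted_impression(quarterly_returns, decay=1.0)
recency_tilted = weighted_impression(quarterly_returns, decay=0.5)

print(f"equal weighting:   {full_record:.2f}% per quarter")
print(f"recency weighting: {recency_tilted:.2f}% per quarter")
```

Under these assumed numbers, equal weighting gives roughly 1.4% per quarter, while the recency-tilted impression lands near 5.4%, almost entirely determined by the last three quarters.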

The Serial Position Effect

Experimental memory research has long documented a related phenomenon called the serial position effect: when people are asked to recall a list of items they have learned in sequence, they tend to remember the items at the beginning and the end of the list better than those in the middle. The primacy effect, superior recall of early items, is usually attributed to the extra rehearsal those items receive, which gives them more opportunity to transfer from working memory into longer-term storage. The recency effect, superior recall of the last few items, reflects their continued presence in working memory at the time of recall.

In real-world judgment, this translates into a systematic underweighting of the middle ground: the long, consistent middle of a performance record that is neither the attention-grabbing early history nor the vivid recent performance. Whether the sequence is a series of events, an investment history, or a person’s behavior across years, it is filtered through a retrieval system that bends toward both ends of the timeline. The recent end has an additional advantage because it feeds directly into the predictive models the brain maintains for the near future.

Predictive Coding and the Update Problem

Recency bias is not purely a storage and retrieval phenomenon. It is also a feature of how the brain’s predictive coding system updates its models in response to new evidence. As discussed in the filtering article earlier in this series, the brain operates as an anticipation machine, continuously generating predictions about what should happen next and allocating processing resources disproportionately to prediction errors, the gaps between expectation and reality.

When new information arrives that deviates from the current model, the predictive coding system flags it as a high-priority signal and initiates a model update. The strength of this update is modulated by the dopaminergic reward prediction error signal: larger prediction errors generate larger dopamine responses, driving more substantial belief revision. This is a powerful and generally well-calibrated learning mechanism for environments where the most recent observation is genuinely the most informative, as it often is when tracking a rapidly changing physical environment or learning a new skill.

Where it misfires is in environments characterized by noisy, high-variance data where the long-run base rate is more informative than any single recent observation. A fund’s quarterly performance is noisy. A restaurant’s quality on a single visit is noisy. A person’s behavior on a specific day is noisy. In each case, the prediction error signal generated by a surprising recent observation can drive a belief update that is disproportionate to the actual informational value of that observation, because the system was designed for physical environments where surprising events tend to reflect genuine changes rather than statistical noise.
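The misfire can be illustrated with a simple delta-rule learner, a textbook simplification rather than a description of actual dopaminergic circuitry. In the sketch below, a belief moves toward each new observation by a fraction of the prediction error. The `learning_rate`, the noise level, and the fixed true value are all assumptions chosen for illustration.

```python
import random


def mean_squared_belief_error(learning_rate, true_value=2.0, noise_sd=5.0,
                              steps=2000, seed=0):
    """Track a stable but noisy quantity with a delta-rule update.

    Returns the average squared distance between the belief and the
    true value across all steps.
    """
    rng = random.Random(seed)
    belief = true_value  # start from a correct belief
    total = 0.0
    for _ in range(steps):
        observation = rng.gauss(true_value, noise_sd)
        prediction_error = observation - belief
        belief += learning_rate * prediction_error  # bigger rate, bigger revision
        total += (belief - true_value) ** 2
    return total / steps


jumpy = mean_squared_belief_error(learning_rate=0.8)    # leans on the latest data
steady = mean_squared_belief_error(learning_rate=0.05)  # leans on the long record

print(f"fast updater error: {jumpy:.2f}")
print(f"slow updater error: {steady:.2f}")
```

With these illustrative settings the fast updater ends up much further from the true value on average, because it keeps chasing noise. The ranking could reverse if the underlying value itself shifted frequently, which is the kind of environment where weighting the latest observation heavily pays off.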

The Hot Hand and the Gambler’s Fallacy: Two Faces of the Same Bias

Recency bias manifests in two apparently opposite cognitive errors that share the same underlying mechanism. The hot hand fallacy is the belief that a person or system currently performing well is more likely to continue performing well, based primarily on the recent streak. Basketball fans believe that a player who has made their last five shots is more likely to make the next one, even though classic statistical analyses found shot outcomes to be far less streak-dependent than fans assume. Investors pile into funds that have recently outperformed, despite the well-replicated finding that past performance predicts future performance only weakly over short horizons.

The gambler’s fallacy is the mirror image: the belief that after a streak of one outcome, the opposite outcome becomes more likely, as if the system were due for a correction. After five heads in a row, the gambler believes a tail is now more probable, forgetting that a fair coin has no memory. Both errors reflect the brain’s attempt to find meaningful patterns in recent data and project them forward, with the hot hand fallacy extrapolating continuation and the gambler’s fallacy extrapolating correction. The shared root is the overweighting of the recent sequence relative to the underlying statistical properties of the process being observed.
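The claim that a fair coin has no memory is easy to check by simulation. The sketch below, with an arbitrary seed and sample size chosen for illustration, flips a simulated fair coin many times, finds every point where the previous five flips were all heads, and measures how often the next flip is also heads.

```python
import random


def heads_rate_after_streak(num_flips=200_000, streak=5, seed=1):
    """Return (share of heads right after a heads streak, number of such cases)."""
    rng = random.Random(seed)
    flips = [rng.random() < 0.5 for _ in range(num_flips)]  # True = heads
    followups = [
        flips[i]
        for i in range(streak, num_flips)
        if all(flips[i - streak:i])  # the previous `streak` flips were all heads
    ]
    return sum(followups) / len(followups), len(followups)


rate, count = heads_rate_after_streak()
print(f"heads after five heads in a row: {rate:.3f} across {count} streaks")
```

The measured share should sit close to one half. A streak makes neither continuation nor correction more likely when the underlying process is independent.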

The Availability Heuristic and Vividness

Nobel laureate Daniel Kahneman and his longtime research collaborator Amos Tversky identified and named a closely related cognitive tendency that amplifies recency bias: the availability heuristic. When estimating the probability or frequency of something, people tend to rely on how easily examples come to mind rather than on statistical base rates. Recent events are more available, but so are vivid, emotionally salient, or personally experienced events, even if they occurred long ago.

The combination of recency and vividness creates particularly powerful distortions. A plane crash reported extensively in the media generates a spike in perceived air travel risk that persists for weeks afterward, even though the statistical risk of flying is essentially unchanged. A neighborhood crime reported in the local news elevates a resident’s perception of their personal risk far beyond what the actual crime rate would justify. In each case, the vivid, recent, emotionally salient event is dominating a probabilistic assessment that should be anchored to base rates accumulated across much longer time spans.

Recency Bias in Financial Decision-Making

Few domains reveal the practical cost of recency bias more clearly than financial decision-making. The disposition effect, the well-documented tendency to sell winning positions too early and hold losing positions too long, partly reflects recency bias: the recent pain of a loss is vivid and motivating in ways that the less salient longer-term record is not. Investors who poured money into technology stocks at the peak of the late 1990s boom were responding to a multi-year uptrend that had recently been spectacular, while discounting centuries of financial history showing that no asset class sustains extreme valuations indefinitely. Those who sold equities in the immediate aftermath of the 2008 financial crisis were responding to a vivid, recent, terrifying event rather than to the full historical record of market recoveries.

Each of these decisions felt rational in the moment, because the recent data was real and its emotional impact was undeniable. The problem was not the data itself but the weight it was assigned relative to the broader evidence base, a weight determined less by its statistical relevance than by its vividness and accessibility.

Correcting for Recency: Deliberate Strategies

Understanding the neural mechanisms behind recency bias suggests several deliberate strategies for improving the quality of judgments it distorts. The most straightforward is explicitly seeking out the base rate: the historical frequency of the relevant outcome across a longer time horizon than the recent data covers. Before concluding that a fund manager is skilled based on recent performance, asking what fraction of fund managers outperform their benchmark over ten years by chance alone reframes the recent quarterly results in their proper statistical context.
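The base-rate question can be turned into a quick back-of-the-envelope calculation. The sketch below assumes, purely for illustration, that a manager with no skill beats the benchmark in any given period with probability 0.5 and that periods are independent. Real figures would differ, since fees and correlated markets both shift the odds.

```python
from math import comb


def prob_at_least(wins, periods, p=0.5):
    """Chance of at least `wins` benchmark-beating periods out of `periods`,
    for a manager whose per-period success probability is p (binomial model)."""
    return sum(
        comb(periods, k) * p ** k * (1 - p) ** (periods - k)
        for k in range(wins, periods + 1)
    )


three_straight_quarters = prob_at_least(3, 3)
seven_of_ten_years = prob_at_least(7, 10)

print(f"three strong quarters by luck: {three_straight_quarters:.1%}")
print(f"7+ winning years out of 10 by luck: {seven_of_ten_years:.1%}")
```

Under these assumptions, about one no-skill manager in eight would post three winning quarters in a row, and roughly one in six would beat the benchmark in at least seven of ten years. A large enough population of managers will therefore always contain impressive recent streaks that say little about skill.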

A second strategy is creating deliberate friction between an emotionally vivid recent event and a consequential decision it might otherwise drive. Behavioral economists call this a cooling-off period: requiring a waiting period between an emotionally impactful event and any decision it might motivate reduces the influence of the event’s vividness on the resulting judgment, allowing the more deliberate system of evidence evaluation to engage. This is the institutional wisdom behind rules against making major investment decisions in the immediate aftermath of market volatility.

Pre-commitment to decision rules established before vivid recent events occur is perhaps the most powerful counter to recency bias in high-stakes domains. An investor who has committed in advance to a rebalancing schedule and asset allocation strategy has reduced the influence of recent market movements on portfolio decisions by design. A hiring committee that commits to evaluation criteria before reviewing candidates reduces the influence of the most recent candidate’s performance on assessments of earlier ones.
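A pre-committed rule is easiest to appreciate when written down explicitly. The sketch below shows one hypothetical form such a rule could take: a 60/40 target allocation with a five-percentage-point tolerance band. The asset names, targets, and band are invented for illustration. The point is that recent returns never appear as an input, only current holdings and the targets fixed in advance.

```python
def rebalance_trades(holdings, targets, band=0.05):
    """Return the trades (positive = buy, negative = sell) that restore
    the target weights, or an empty dict if every asset is within `band`
    of its target weight."""
    total = sum(holdings.values())
    drift = {asset: holdings[asset] / total - targets[asset] for asset in targets}
    if all(abs(d) <= band for d in drift.values()):
        return {}  # inside the tolerance band: do nothing
    return {asset: targets[asset] * total - holdings[asset] for asset in targets}


targets = {"stocks": 0.60, "bonds": 0.40}        # fixed in advance
after_rally = {"stocks": 70_000.0, "bonds": 30_000.0}

print(rebalance_trades(after_rally, targets))
```

After the hypothetical rally, the rule calls for selling about 10,000 of stocks and buying the same amount of bonds, the opposite of what a recency-driven investor would feel inclined to do.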

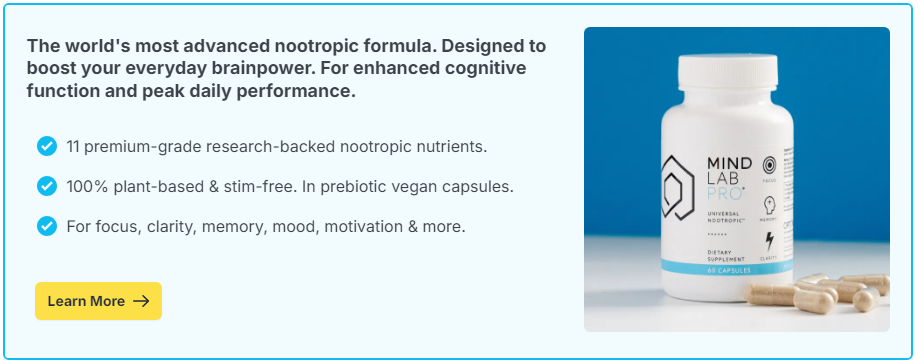

The prefrontal systems perform this kind of deliberate evidence integration, moderating the automatic salience-weighting of the emotional and memory systems. Supporting them is one reason investing in cognitive health matters for decision quality. The brain that sleeps well, manages stress effectively, and maintains robust prefrontal executive function is better equipped to override the default pull of the recent and vivid in favor of the statistically relevant and long-run accurate. Targeted nootropic support for prefrontal function is, in this context, not merely an investment in memory or focus but an investment in the quality of the judgments that shape consequential outcomes across a lifetime.